Data Classification – How to Categorize It, Where to Store It

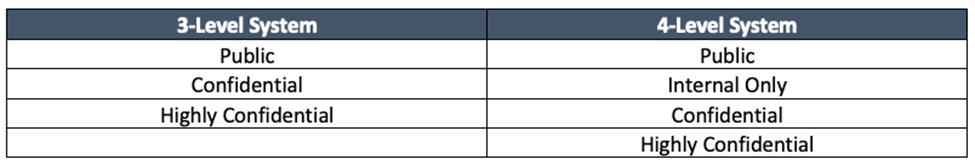

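Previously, we discussed the requirements of a mature data classification program. In this post, we are going to review the administrative mechanics of such a program. Data classification, you’ll recall, usually uses a three- or four-level system: Public, Confidential, and Highly Confidential in the three-level version, with the four-level version adding an Internal level between Public and Confidential.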

I recommend that organizations new to data classification begin with the three-level system, as these levels and their corresponding actions and controls can be challenging to define. The three-level system considers all internal data confidential.

The priority therefore is to create the processes and procedures needed to support confidential data. You can identify the limited amount of Public and Highly Confidential data later through interviews and technical discovery. Then you can clearly communicate your goals across the business, including locations, processes, and applications.
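The default rule of the three-level system can be expressed in a few lines. This is a minimal sketch, not a prescribed implementation: the `Classification` enum, the `classify` function, and the two input sets (standing in for the results of interviews and technical discovery) are hypothetical names chosen for illustration.

```python
from enum import Enum


class Classification(Enum):
    """Three-level scheme, least to most sensitive."""
    PUBLIC = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


def classify(asset_id: str,
             public_assets: set[str],
             highly_confidential_assets: set[str]) -> Classification:
    """Return an asset's level; anything not yet identified defaults to Confidential.

    The two sets are hypothetical stand-ins for the results of interviews and
    technical discovery.
    """
    # Check Highly Confidential first so an asset listed in both sets gets the stricter label.
    if asset_id in highly_confidential_assets:
        return Classification.HIGHLY_CONFIDENTIAL
    if asset_id in public_assets:
        return Classification.PUBLIC
    # Default rule of the three-level system: internal data is Confidential.
    return Classification.CONFIDENTIAL


# Example with hypothetical asset names.
public = {"press-release-2023.pdf"}
highly = {"payroll.xlsx"}
print(classify("q3-forecast.pptx", public, highly))  # Classification.CONFIDENTIAL
```

The design choice is that discovery only ever moves data *out* of the Confidential default, which is why the program can start protecting everything before the Public and Highly Confidential sets are complete.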

Today, we are going to cover how data is normally stored in organizations and where. These structures are going to have a huge impact on your program’s scope, operations, and technical decisions. As every organization has different business processes and technologies, each data classification project is going to be different, too.

Structured vs. Unstructured Data

Categorizing structured and unstructured data is the easiest data classification component to explain yet the hardest to manage. Structured data is any data held within an application, usually in a database. Your organization’s application owners, database administrators, or the application vendor can explain the different types of data stored in the application.

Organizations marvel at how much data, and how many types of data, are stored in applications. HR systems, customer relationship management (CRM) systems, enterprise resource planning (ERP) platforms, accounting platforms, and M&A solutions are just a few of the applications that have historically held huge stockpiles of structured data. Many of these systems are regulated (e.g., HR, ERP), so their data must be retained for a specific amount of time and, in some cases, indefinitely.

“The security and governance capabilities of individual software distributions don’t always meet all the requirements for granular access control and emerging governance requirements.”

– Doug Henschen

Unstructured data is data not stored in an application. Excel spreadsheets, PowerPoint presentations, and Word docs are classic examples of unstructured data. Unstructured data is often found in reports generated from structured data systems.

Unstructured data is usually ten times larger by volume than structured data. The reason for this is simple: Saving copies of important files in several places makes employees feel secure. Email historically accounts for the largest amount of unstructured data in an organization.

Think about it: Employees email an important or sensitive document to ensure everyone has a copy, then save the email in a PST file or in a folder on their laptop. There could be hundreds of copies of a single file containing highly sensitive data in hundreds of locations across the network.
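One common technical-discovery step for this sprawl is grouping files by a content hash, so byte-identical copies surface together regardless of file name or folder. The sketch below is illustrative only: `find_duplicates` and `sha256_of` are hypothetical helpers, and the approach only catches exact copies. It does not look inside PST archives or email attachments, and it will not match a copy that has been edited.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files are never read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicates(root: str) -> dict[str, list[Path]]:
    """Group every file under root by content hash; keep only hashes seen more than once."""
    groups: dict[str, list[Path]] = defaultdict(list)
    for path in Path(root).rglob("*"):
        if path.is_file():
            groups[sha256_of(path)].append(path)
    return {h: paths for h, paths in groups.items() if len(paths) > 1}
```

Running `find_duplicates` against a file share returns one entry per duplicated document, listing every location where a copy lives, which gives the classification team a concrete inventory to work from.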

Ominous Approach of Data Lakes and Cloud Solutions

A trend in business today is to seek value in all the structured data that organizations store. Industries from real estate to waste management have discovered hidden value in the data they are collecting. Some may think this trend started in the finance industry, but they would be wrong.

The rise of data as a business asset started with the focus on analytics that Google and Facebook pioneered. These and similar organizations realized they could increase profitability and customer stickiness if they targeted their advertising at specific users.

IP addresses, login times, hovering points, and other data provided unique insight into their users, which they could sell to a larger pool of advertisers. That information was also useful to other organizations for a host of other reasons. Remember Cambridge Analytica? The new value this data provided was made possible by first-of-their-kind data lakes, though they were not called that at the time.

“The key is the new stuff doesn’t have the benefits that we expected from the old stuff.”

– Merv Adrian

Data lakes give organizations the unique opportunity to “dump” data from any number of sources and in any format. They are usually unmanaged and open to any account with access to the lake. Regardless of the lake’s purpose (marketing, business insights, archiving, etc.), its data classification characteristics are the same. First, it accepts all data. Second, it is an open platform by design. Third, most of these solutions are migrating to or being built in the cloud.

Data Warehouse vs. Data Lake

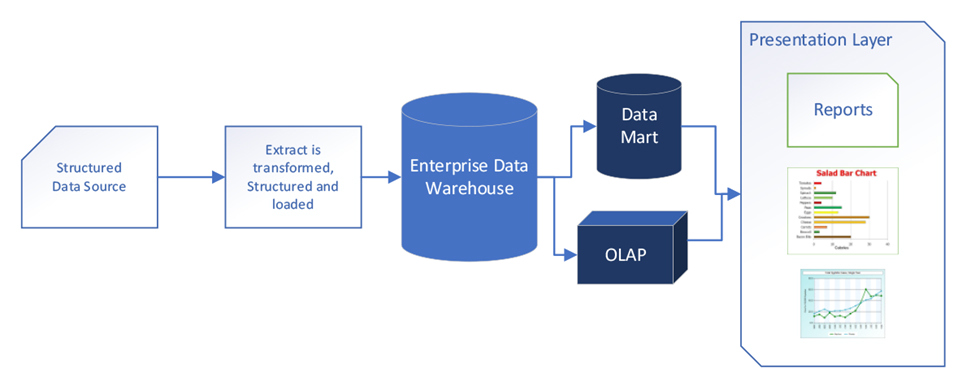

Data warehouses are more secure than data lakes because data is cleansed before it enters the warehouse. See below:

Data warehouses cleanse data before it enters the cloud.

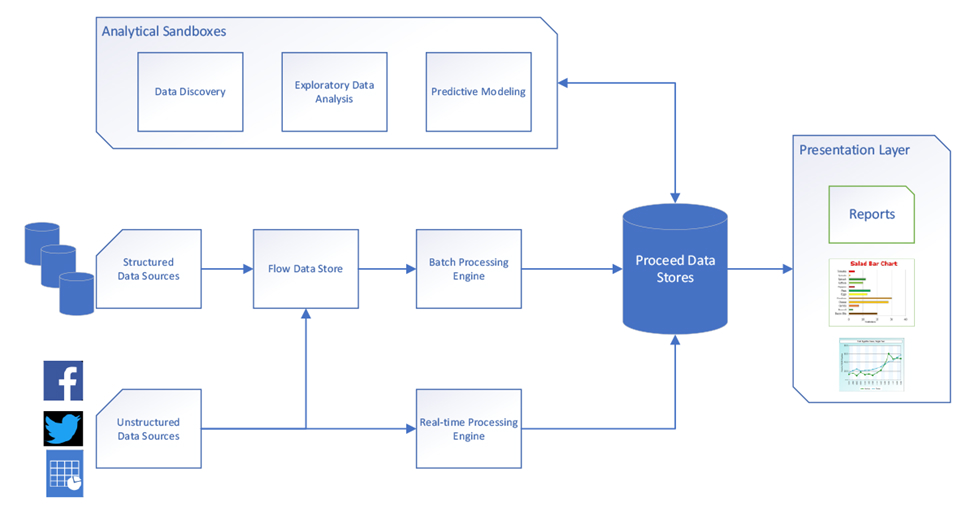

A data lake, on the other hand, takes in ALL data without first transforming or restructuring it:

A data lake, unlike a data warehouse, takes in ALL data, no questions asked.
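The contrast above is often described as schema-on-write (the warehouse validates data as it is loaded) versus schema-on-read (the lake stores data as-is and structure is applied later, if at all). The toy model below is a sketch of that difference, not of any particular product: the `Warehouse` and `Lake` classes and the `ORDER_SCHEMA` field list are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical schema for illustration: required field names and their types.
ORDER_SCHEMA = {"order_id": int, "customer": str, "amount": float}


@dataclass
class Warehouse:
    """Schema-on-write: only records matching the schema are loaded."""
    schema: dict[str, type]
    rows: list[dict[str, Any]] = field(default_factory=list)
    rejected: list[dict[str, Any]] = field(default_factory=list)

    def load(self, record: dict[str, Any]) -> bool:
        ok = set(record) == set(self.schema) and all(
            isinstance(record[k], t) for k, t in self.schema.items()
        )
        if ok:
            self.rows.append(record)
        else:
            self.rejected.append(record)  # held back for cleansing or review
        return ok


@dataclass
class Lake:
    """Schema-on-read: every record is stored exactly as received."""
    objects: list[Any] = field(default_factory=list)

    def load(self, record: Any) -> bool:
        self.objects.append(record)
        return True


incoming = [
    {"order_id": 1, "customer": "Acme", "amount": 19.99},
    {"order_id": "2", "customer": "Initech"},              # wrong type, missing field
    {"note": "free text pasted from an email with payroll details"},
]
wh, lake = Warehouse(ORDER_SCHEMA), Lake()
for r in incoming:
    wh.load(r)
    lake.load(r)
print(len(wh.rows), len(wh.rejected), len(lake.objects))  # 1 2 3
```

The free-text record shows the classification risk: the warehouse never admits it, while the lake keeps it alongside everything else with no indication of how sensitive it is.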

You cannot afford to overlook the data classification issues that arise when pouring all this data into a lake, especially from a regulatory compliance standpoint. You need to be involved in the design of the lake(s) from the start.

Regardless of how the lake is built, data classification needs to be a consideration in its design. For example, suppose a lake is being designed for archival purposes. Should Highly Confidential data be included? Should it have its own lake, or should it be excluded completely?

Applying classification either at the beginning of data ingestion or at the end, when data is exported from the Process Data Stores, is your best strategy.
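Classifying at ingestion can be pictured as a gate in front of the lake: each record arrives tagged with its source system and classification, and each lake declares the most sensitive level it may hold. This is a hedged sketch; `Level`, `LakePolicy`, `TaggedRecord`, and `ingest` are hypothetical names, and the policy shown (an archive lake that excludes Highly Confidential data) is just one possible answer to the design questions above.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Level(IntEnum):
    PUBLIC = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


@dataclass(frozen=True)
class LakePolicy:
    """Hypothetical per-lake rule: the most sensitive level this lake may hold."""
    name: str
    max_level: Level


@dataclass
class TaggedRecord:
    source_system: str  # provenance: which upstream system supplied the record
    level: Level        # classification assigned at ingestion
    payload: dict = field(default_factory=dict)


def ingest(record: TaggedRecord, policy: LakePolicy, lake: list) -> bool:
    """Admit a record only if its classification is within the lake's ceiling."""
    if record.level > policy.max_level:
        # Rejected records could instead be routed to a separate, more tightly
        # controlled store; this sketch simply refuses them.
        return False
    lake.append(record)
    return True


archive = LakePolicy("archive", max_level=Level.CONFIDENTIAL)
archive_lake: list = []
print(ingest(TaggedRecord("crm", Level.CONFIDENTIAL, {"id": 7}), archive, archive_lake))        # True
print(ingest(TaggedRecord("hr", Level.HIGHLY_CONFIDENTIAL, {"id": 9}), archive, archive_lake))  # False
```

Keeping `source_system` on every record also preserves the provenance the governing groups need later, since the lake itself will not track it for them.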

Knowing which systems are providing data to the lake is important. When data is put into a lake, there are fewer protections available to the governing groups (Cyber, Risk, Compliance, etc.) compared to enterprise databases or relational database systems.

With traditional database management systems, the information security team might handle all the network security and access control protections but do little with the data once it enters the database management system.

Data lake structures, however, do not come with all of the governance capabilities and policies associated with a traditional database management system, from basic referential integrity to role-based access and separation of duties.
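Because the lake will not enforce role-based access or separation of duties on its own, those checks have to be supplied by a layer in front of it. The sketch below shows the shape of such checks under stated assumptions: the role names, the `ROLE_CLEARANCE` table, and the conflicting-role pair are all hypothetical examples, not recommended settings.

```python
from enum import IntEnum


class Level(IntEnum):
    PUBLIC = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


# Hypothetical roles and the most sensitive level each may read.
ROLE_CLEARANCE = {
    "marketing_analyst": Level.PUBLIC,
    "finance_analyst": Level.CONFIDENTIAL,
    "hr_records_admin": Level.HIGHLY_CONFIDENTIAL,
}

# Hypothetical separation-of-duties rule: whoever loads data into the lake
# should not also be the person who approves access to it.
CONFLICTING_ROLES = [frozenset({"lake_ingest_admin", "lake_access_approver"})]


def can_read(user_roles: set[str], data_level: Level) -> bool:
    """Allow reads if any role the user holds is cleared for the data's level."""
    return any(
        role in ROLE_CLEARANCE and ROLE_CLEARANCE[role] >= data_level
        for role in user_roles
    )


def violates_sod(user_roles: set[str]) -> bool:
    """True if the user holds every role in any conflicting pair."""
    return any(pair <= user_roles for pair in CONFLICTING_ROLES)


print(can_read({"marketing_analyst"}, Level.CONFIDENTIAL))          # False
print(can_read({"finance_analyst"}, Level.CONFIDENTIAL))            # True
print(violates_sod({"lake_ingest_admin", "lake_access_approver"}))  # True
```

Unknown roles are denied by default, which mirrors the conservative default of the classification scheme itself.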

One way to approach data lake security is to think of it as a pipeline with upstream, midstream, and downstream components, according to Merv Adrian. The threat vectors associated with each stage are somewhat different and therefore need to be addressed differently.

Data lakes provide great value to the organization but require a different governance model to maintain classification controls.

Coming Up

In my next post, I will pull all the elements we’ve previously discussed together with the Data Management Controls Matrix.

Frequently Asked Questions

Secure File Transfer is a process that allows the safe and efficient sharing or transferring of files over a network or the internet while ensuring the data’s security and integrity. It employs protocols such as Secure File Transfer Protocol (SFTP), File Transfer Protocol Secure (FTPS), or Hypertext Transfer Protocol Secure (HTTPS) to provide encryption and secure channels for data transfer. This method is crucial in various sectors, including R&D, higher education, and government agencies, where sensitive data needs to be securely shared. Key features of Secure File Transfer include encryption, access controls, and transfer acceleration, facilitating secure and efficient data transfers, even for large or complex files.

Using secure channels for file transfer helps protect data during transmission, ensuring data privacy and integrity. It also provides a record of data transfers, which can be useful for tracking and monitoring purposes, and for demonstrating compliance with data protection regulations.
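A transfer record is most useful for compliance when it is hard to alter after the fact. One generic way to get that property is to chain each log entry to the hash of the previous one and store a checksum of the transferred file. The sketch below illustrates that idea only; `TransferLog` is a hypothetical class and is not how Kiteworks or any specific product implements its audit logs.

```python
import hashlib
import json
from datetime import datetime, timezone


class TransferLog:
    """Append-only, hash-chained log of file transfers (a tamper-evident sketch)."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, sender: str, recipient: str, filename: str, content: bytes) -> dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "sender": sender,
            "recipient": recipient,
            "filename": filename,
            # Checksum lets the recipient confirm the file arrived unaltered.
            "file_sha256": hashlib.sha256(content).hexdigest(),
            "prev_hash": prev_hash,
        }
        entry["entry_hash"] = self._hash(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _hash(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or removing any entry
        other than the newest one breaks it."""
        prev = self.GENESIS
        for e in self.entries:
            if e["prev_hash"] != prev or e["entry_hash"] != self._hash(e):
                return False
            prev = e["entry_hash"]
        return True


log = TransferLog()
log.record("alice@example.com", "bob@example.com", "contract.pdf", b"...")
print(log.verify())  # True
log.entries[0]["recipient"] = "mallory@example.com"
print(log.verify())  # False
```

Note the limitation called out in `verify`: silently dropping the newest entry is not detected by the chain alone, so real systems typically also anchor the latest hash somewhere outside the log.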

Managed File Transfer (MFT) is a technology that facilitates secure and efficient data transfer between systems within and across organizations. Unlike standard file transfer protocols like FTP, MFT solutions offer enhanced security and control, utilizing secure protocols such as SFTP, FTPS, and HTTPS for data transmission. They also provide features like automation, scheduling, real-time monitoring, and notifications for file transfer activities, enabling organizations to manage the data transfer process more effectively. MFT is particularly beneficial in industries that regularly transfer large volumes of sensitive data, such as finance, healthcare, and retail, ensuring compliance with various data security and privacy regulations.

Secure File Transfer Protocol (SFTP) is a network protocol that offers file access, transfer, and management functionality over any reliable data stream; it is typically run over SSH-2 to provide secure file transfer. SFTP encrypts both commands and data, preventing passwords and sensitive information from being transmitted in the clear and thus protecting data integrity and privacy. Compared to SCP, which only transfers files, SFTP supports a broader range of operations, such as resuming interrupted transfers, directory listings, and remote file removal, making it a versatile tool for managing secure file transfers. In the context of Secure File Transfer solutions, SFTP combined with access controls and transfer acceleration enables efficient and secure data transfers, even for large files or complex datasets.
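Conceptually, resuming an interrupted transfer means checking how many bytes already reached the destination and continuing from that offset instead of starting over. The sketch below illustrates only that idea, using two local files as stand-ins for the source and the remote copy; it does not speak the SFTP protocol, and `resume_copy` is a hypothetical helper.

```python
import os


def resume_copy(src: str, dst: str, chunk_size: int = 1 << 20) -> int:
    """Copy src to dst, continuing from the number of bytes dst already holds.

    Assumes any existing dst is an intact prefix of src (a real client would
    verify this, e.g. by comparing checksums). Returns bytes written this call.
    """
    offset = os.path.getsize(dst) if os.path.exists(dst) else 0
    if offset > os.path.getsize(src):
        raise ValueError("destination is larger than source; cannot resume")
    written = 0
    with open(src, "rb") as fin, open(dst, "ab") as fout:
        fin.seek(offset)  # skip the bytes that already arrived
        for chunk in iter(lambda: fin.read(chunk_size), b""):
            fout.write(chunk)
            written += len(chunk)
    return written
```

For a multi-terabyte file, this is the difference between re-sending only the missing tail and re-sending everything after a dropped connection.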

Kiteworks offers features like AES 128-bit encryption, secure email, and a secure container for offline access. It also provides comprehensive, immutable audit logs and integrates with existing security infrastructure, making it a robust solution for Secure File Transfer. Kiteworks supports the transfer of large files up to 16 TB in size and provides native mobile apps for Android and iOS with features like secure email, file sharing, and offline access. Administrators can create secure forms for compliant file uploads, and the platform allows for customization of branding, appearance, and text.

Additional Resources